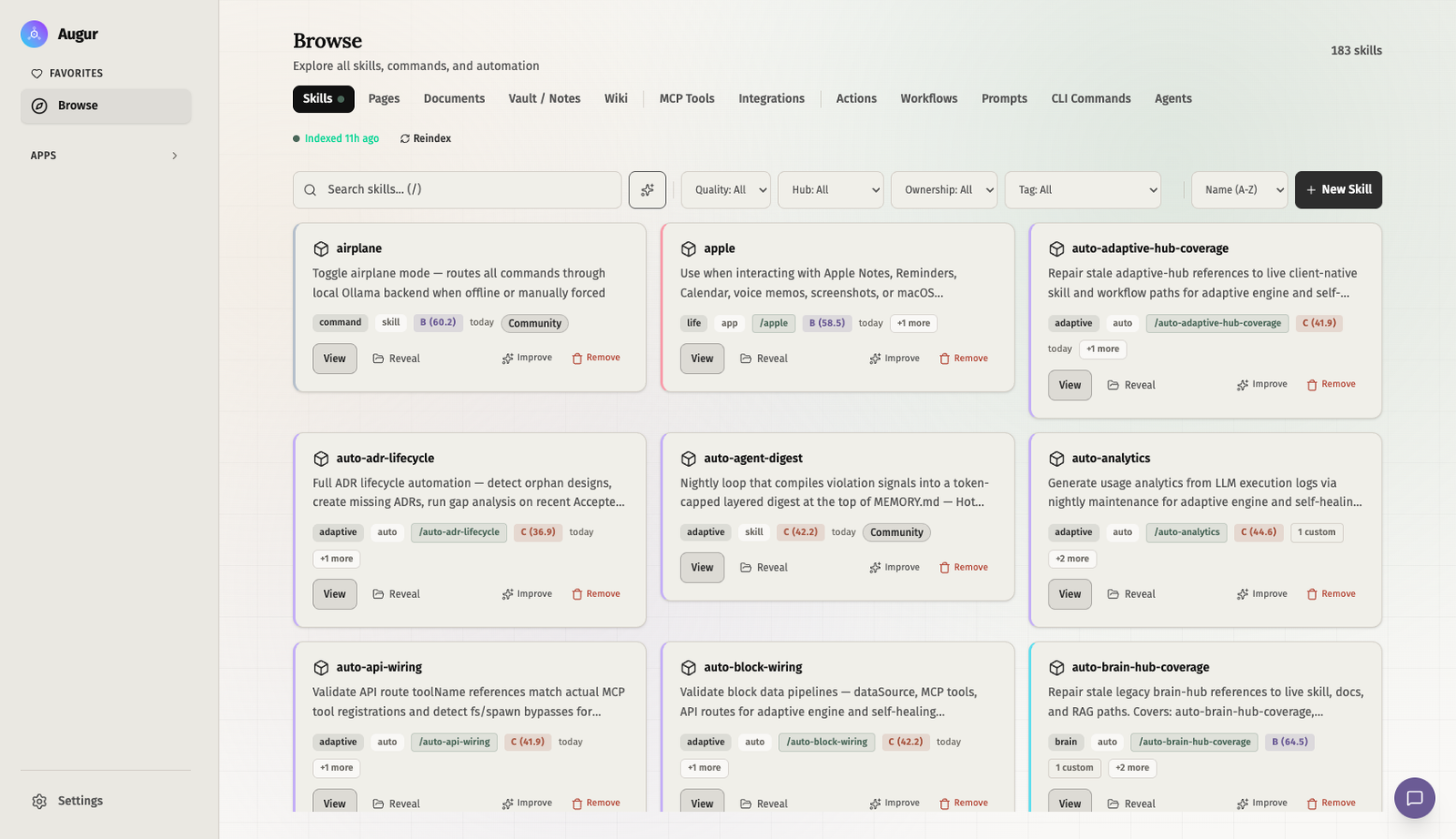

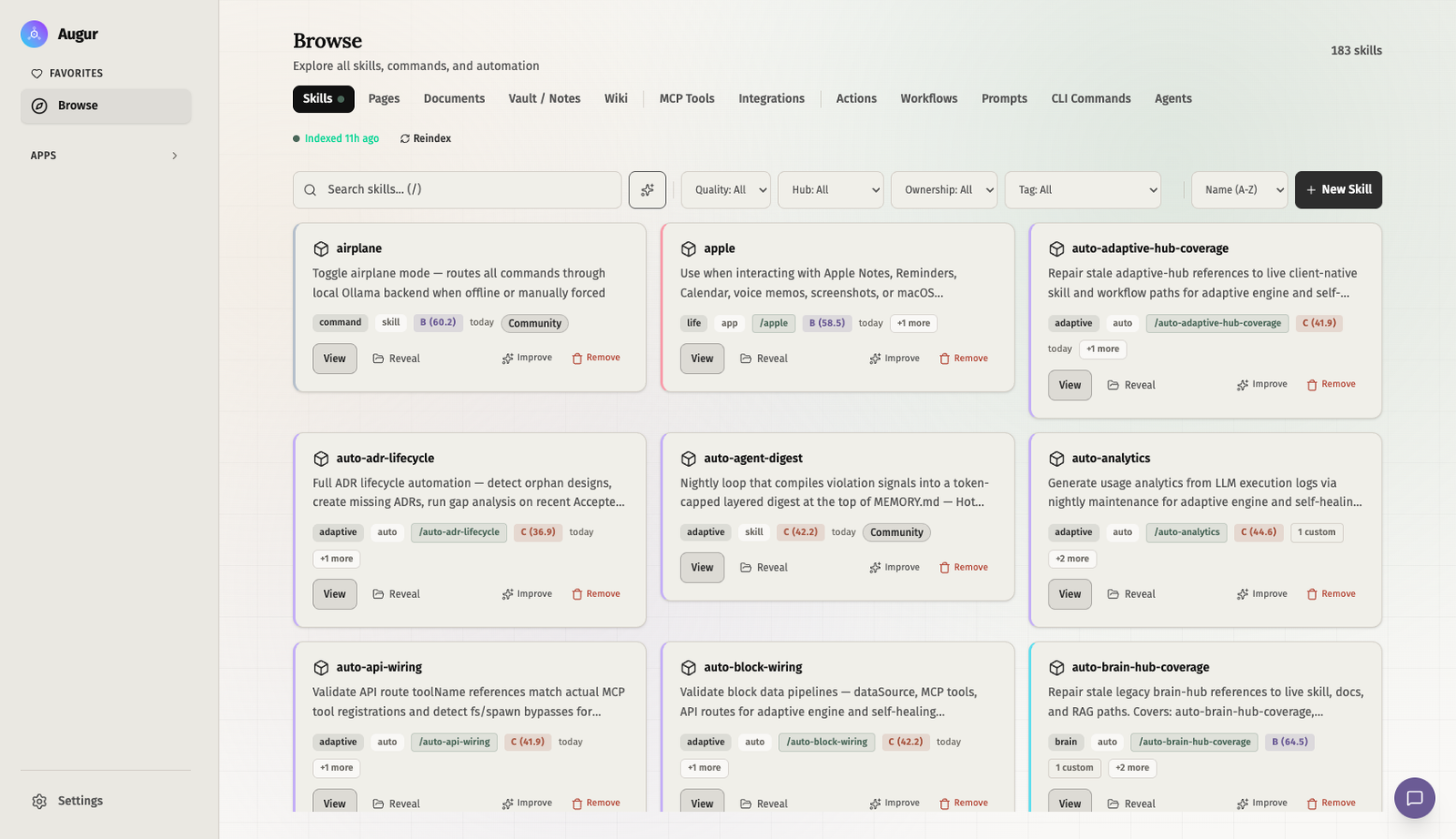

Browse

Inspect local skills, commands, client surfaces, documents, and compounding state from one place.

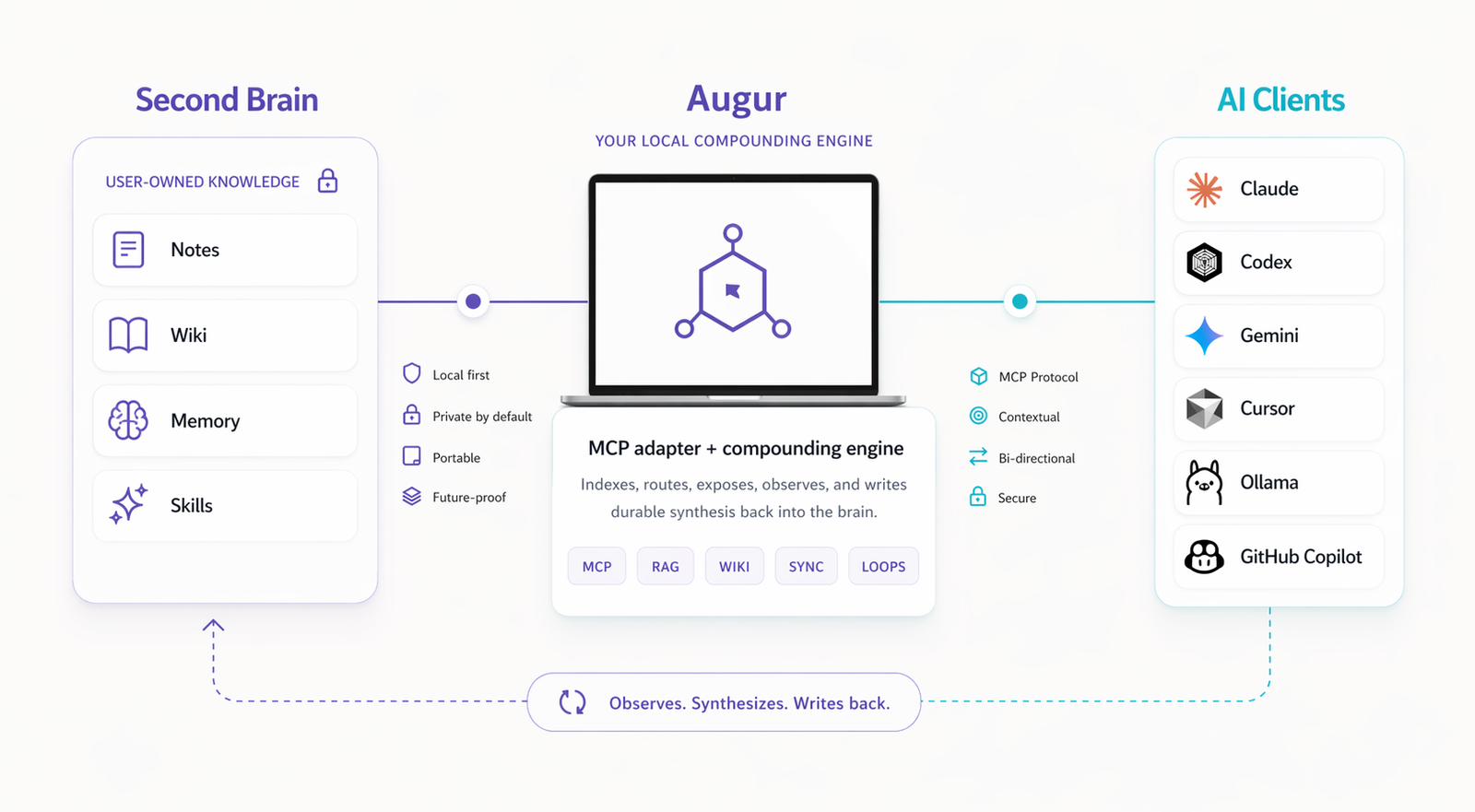

Augur connects your brain to AI clients, compounds useful work back into it, and keeps the system inspectable.

Local-first. Durable. Inspectable.

The model

Your brain stays in files you own. Augur turns it into tools, context, and client surfaces that can compound over time.

Not just a bridge. Augur connects the tools you use and writes useful outcomes back into the brain.

What Augur adds

Start with a vault or plain-folder brain. Augur exposes it to the AI clients you choose and compounds useful work back into it.

Turn notes, sources, and sessions into linked knowledge that gets better over time.

Keep routing, summaries, and generated surfaces maintained without manual cleanup.

Inspect what exists, what is running, and what is compounding.

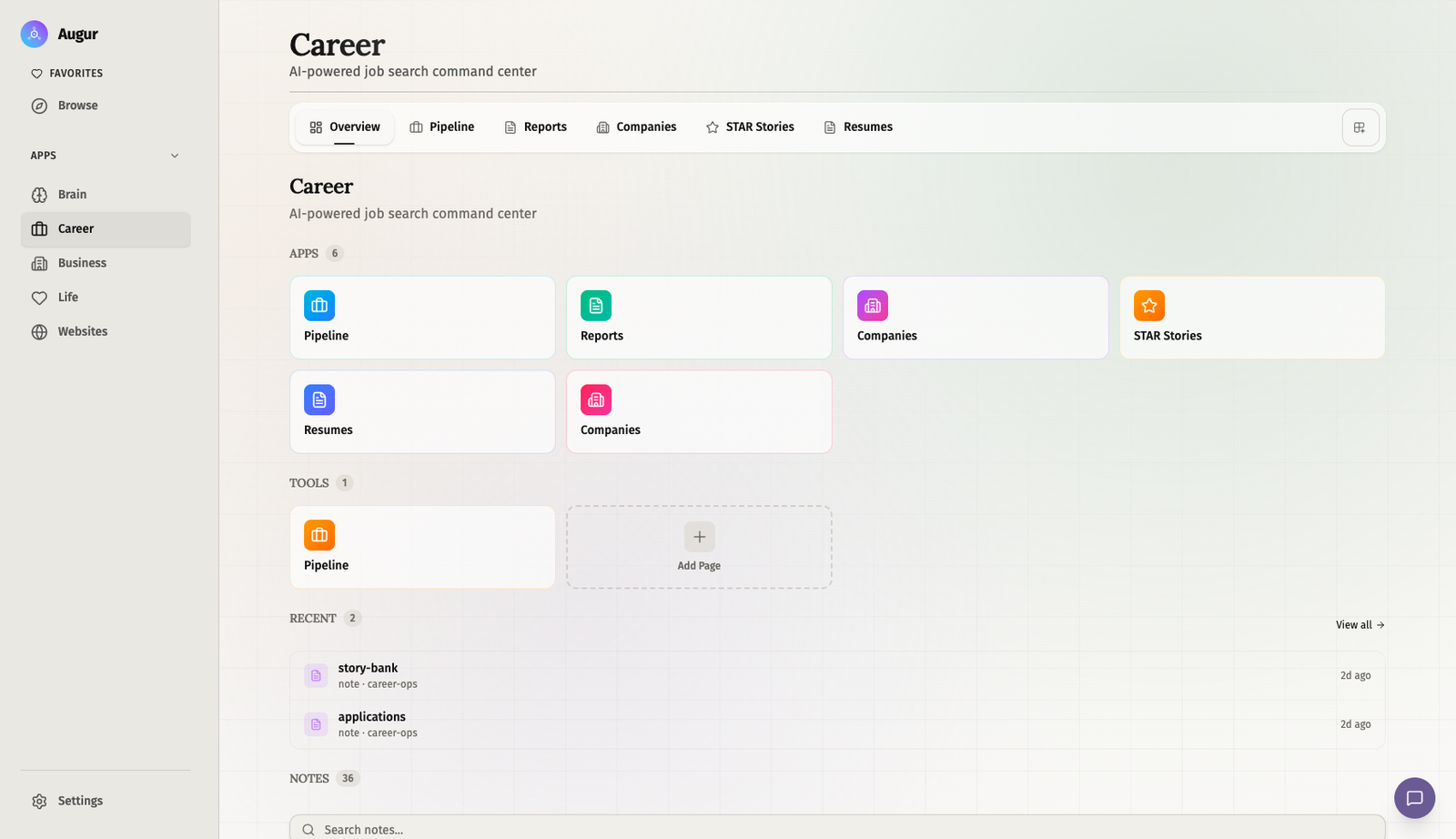

Career is an example of a user-built app running on top of the same local second brain.

For organizations

Augur Enterprise is the closed-source central tier for organizations whose teams already run Augur locally. It lets IT manage the fleet of runtimes, set policies across the org, and compound nightly into shared org intelligence, built from how people actually work rather than from what gets uploaded to SharePoint. It is the opposite of top-down copilots such as Glean or Copilot.

Inventory, control, and policy across every employee laptop running Augur. IT keeps audit, sandboxing, and inspection.

Per-laptop work compounds into a shared org wiki — readable by every employee through the runtime they already have.

Top-down copilots scrape what's been uploaded to a corporate document repository. Augur Enterprise builds intelligence from real work — no upload required.

Start here

Inspect the architecture now.

Join the community release next.

The public roadmap shows what's shipped, what's in flight, and what's next. The MVP release lands in May 2026.

The first community release lands May 2026. Join the waitlist and get the launch update when it opens.

The architecture overview shows how the layers fit together.

Inspect the architecture, read the code, and contribute. The runtime, skills, and dashboard all live on GitHub.